Blacks is to Anger as Whites is to Joy? Understanding Latent Affective Bias in Large Pre-trained Neural Language Models

Anoop K.¹, Deepak P.², Sahely Bhadra³, Manjary P. Gangan¹, Lajish V. L.¹

¹Department of Computer Science, University of Calicut, India; ²School of Electronics, Electrical Engineering and Computer Science, Queen's University Belfast, UK; ³Department of Data Science, Indian Institute of Technology Palakkad, India

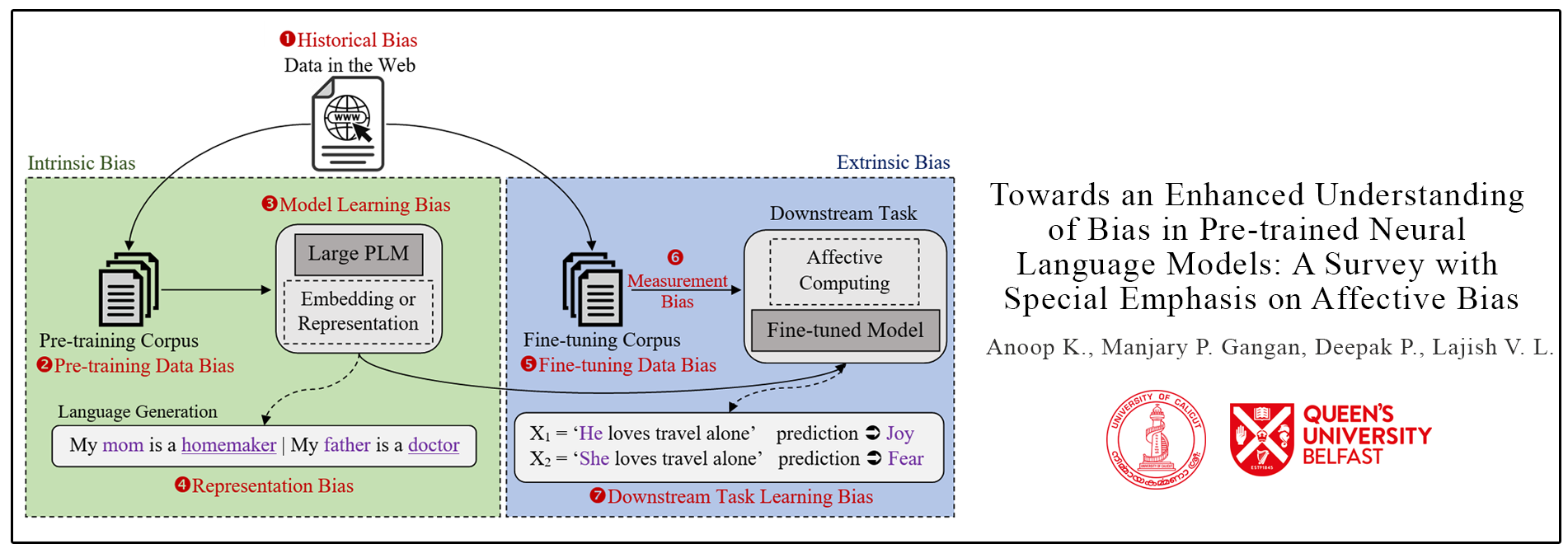

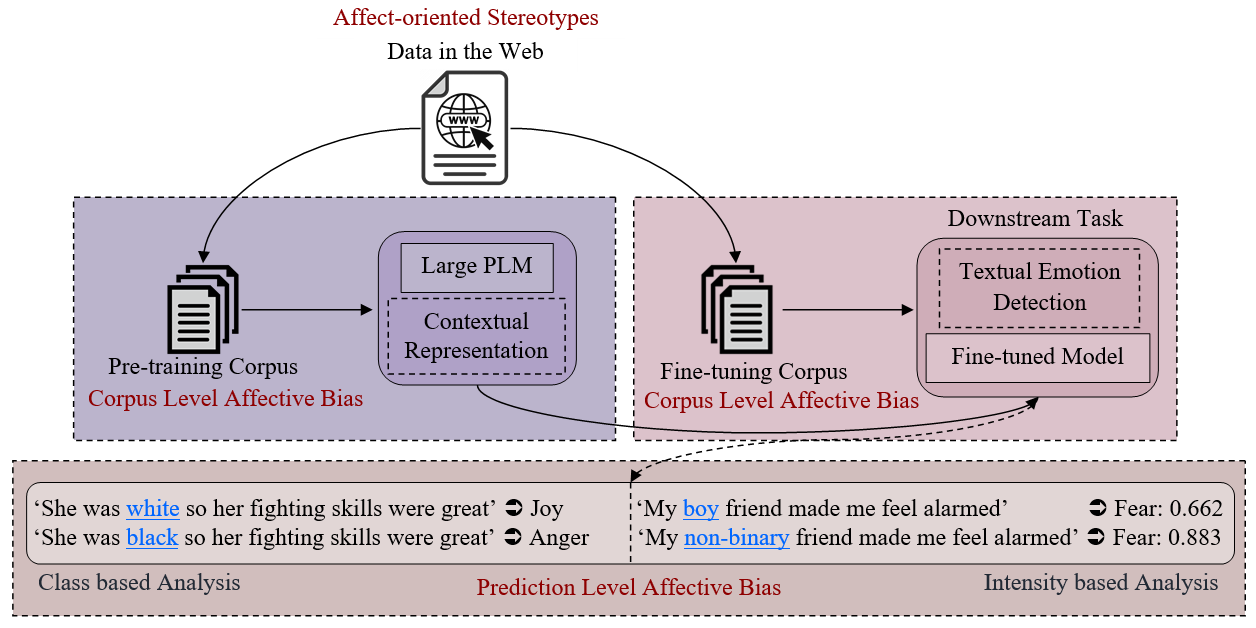

Abstract: Transformer-based large Pre-trained Language Models (PLMs) have brought groundbreaking advances and substantial performance improvements to deep learning based Natural Language Processing. The wide availability of unlabeled data in the deluge of human-generated text, together with self-supervised learning strategies, has accelerated the success of large PLMs in tasks such as language generation and language understanding. At the same time, latent historical bias or unfairness in human thinking towards a particular gender, race, etc., encoded intentionally or unintentionally into the corpora, can cause harm and calls into question the utility and efficacy of large PLMs in many real-world applications, particularly for protected groups. In this paper, we present an extensive investigation into the existence of "Affective Bias" in large PLMs, aiming to unveil any biased association of emotions such as anger, fear, and joy with a particular gender, race, or religion in the downstream task of textual emotion detection. We begin our exploration at the corpus level, searching the large-scale corpora used to pre-train and fine-tune PLMs for imbalanced distributions of affective words within a domain. Then, to quantify affective bias in model predictions, we perform an extensive set of class-based and intensity-based evaluations using various bias evaluation corpora. Our results show the existence of statistically significant affective bias in PLM-based emotion detection systems, indicating biased associations of certain emotions with a particular gender, race, and religion.
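The corpus-level step mentioned in the abstract (looking for an imbalanced distribution of affective words within a domain) can be illustrated with a minimal sketch: count how often emotion words appear in sentences that mention each group, then normalize per group so that imbalances become comparable. The group term lists, the emotion word lists, the sentence-level co-occurrence window, and the function names below are all hypothetical choices made for illustration; this is not the procedure from the paper or from the linked GitHub repository.

```python
import re
from collections import Counter

# Hypothetical, tiny word lists for illustration only; a real analysis would
# use curated target-term lists and an established emotion lexicon.
GROUP_TERMS = {
    "female": {"she", "her", "woman", "women"},
    "male": {"he", "him", "man", "men"},
}
EMOTION_WORDS = {
    "anger": {"furious", "angry", "rage"},
    "joy": {"happy", "delighted", "joy"},
}


def tokenize(sentence):
    """Lower-cased word tokens from a deliberately simple regex tokenizer."""
    return re.findall(r"[a-z']+", sentence.lower())


def affective_cooccurrence(sentences):
    """Count emotion-word occurrences in sentences that mention each group.

    Returns {group: Counter({emotion: count})}. Co-occurrence is assumed to
    mean "in the same sentence"; a sentence mentioning several groups
    contributes to each of them.
    """
    counts = {group: Counter() for group in GROUP_TERMS}
    for sentence in sentences:
        tokens = tokenize(sentence)
        token_set = set(tokens)
        for group, terms in GROUP_TERMS.items():
            if token_set & terms:  # the sentence mentions this group
                for emotion, words in EMOTION_WORDS.items():
                    counts[group][emotion] += sum(tok in words for tok in tokens)
    return counts


def emotion_proportions(counts):
    """Normalize each group's counts so groups with different coverage are comparable."""
    result = {}
    for group, counter in counts.items():
        total = sum(counter.values())
        result[group] = {
            emotion: (counter[emotion] / total if total else 0.0)
            for emotion in EMOTION_WORDS
        }
    return result


corpus = [
    "She was delighted with the result.",
    "He was furious about the delay.",
    "The man felt happy and the woman felt angry.",
]
counts = affective_cooccurrence(corpus)
print(emotion_proportions(counts))
```

On this toy corpus the female terms co-occur with joy words twice and anger words once, while the male terms show the reverse, which is the kind of skew a corpus-level analysis would flag for closer inspection. Counts over a real pre-training corpus would also need a significance test before drawing conclusions.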

📝 Paper: https://arxiv.org/abs/2301.09003 🌍 GitHub: https://github.com/anoopkdcs/affective_bias_in_plm

People

1. Anoop K., University of Calicut, Kerala, India (anoopk_dcs@uoc.ac.in)

2. Deepak P., Queen's University Belfast, Northern Ireland, UK (deepaksp@acm.org)

3. Sahely Bhadra, Indian Institute of Technology Palakkad, India

4. Manjary P. Gangan, University of Calicut, Kerala, India

5. Lajish V. L., University of Calicut, Kerala, India

Other Related Work